Residual neural network

They even experiment with dropouts in-between the convolutional layers which have their own potential.įigure 3. What does increasing the width mean? For each convolutional layer in the residual block, they add more kernels. They take the same residual block concept from the original ResNets and try to increase the width of each block. They try to decrease the depth of the networks and increase the width. In the paper, they propose a new way of using the residual blocks for building Residual Neural Networks. With the above two problems to tackle, Sergey Zagoruyko and Nikos Komodakis came up with Wide Residual Neural Networks. Introduction to Wide Residual Neural Networks The above two problems directly tell that just building deeper networks even with residual blocks will not help. And even may not be helpful for transfer learning.

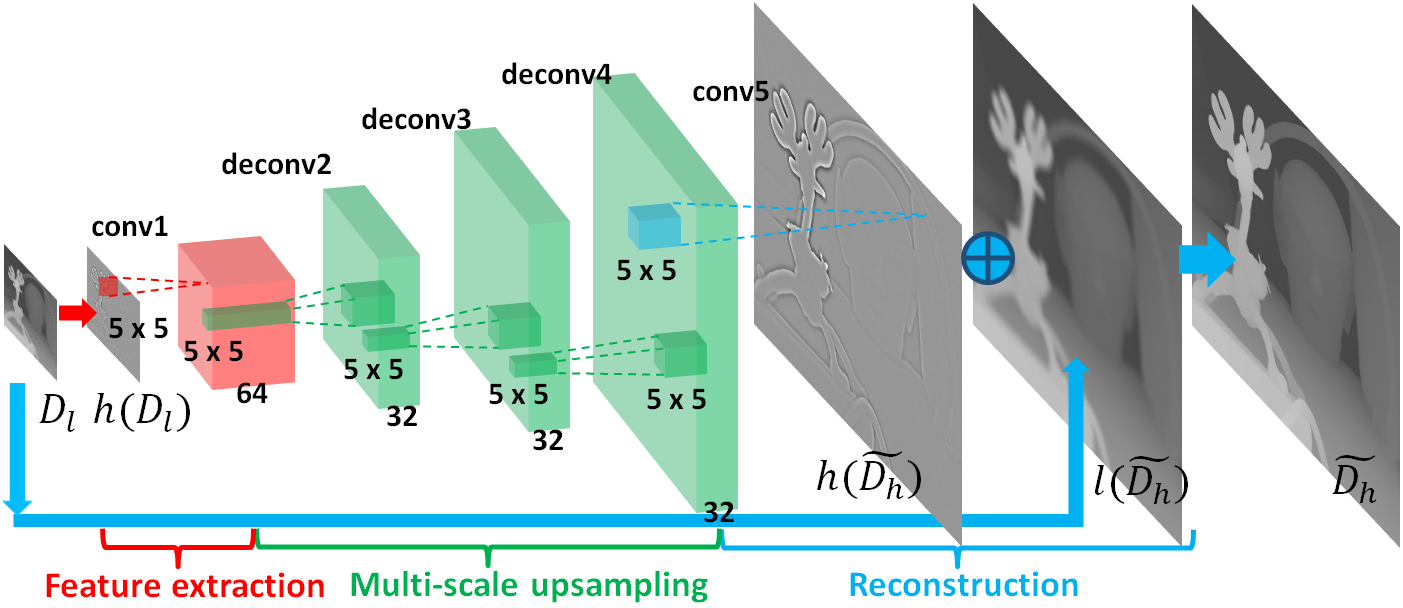

This means that they may not be just as fast to converge. Very deep residual networks (those having thousands of layers) have a dimishing return for feature reuse.Each fraction of improvement in performance costs double the number of layers.There came a point where deeper residual blocks give diminishing results.Īs mentioned in the Wide Residual Network, when a ResNet is thousands of layers deep, there are a few problems we face. This means they perform better in transfer learning in comparison to other networks of the same scale and number of parameters.īut the main focus to improving the accuracy of ResNets was by adding more and more residual blocks to increase the depth of the network. ResNets used in image classification, object detection, and image segmentation.īecause of the residual blocks, ResNets were able to show better generalization capabilities. And hence, we will have a thorough discussion of the paper in this post.įigure 2. Although the paper has a decent number of citations, still, many newcomers in deep learning may not get exposure to this paper. After publication, the paper has gone under a few revisions, and the current one is version 4 published in June 2017. Wide Residual Neural Networks were introduced in the paper Wide Residual Networks by Sergey Zagoruyko and Nikos Komodakis. To counter this issue and build even more efficient residual networks, now we have Wide Residual Neural Networks or WRN for short. And this is where we need to take a look at something new. There are diminishing returns after a point. But even just stacking one residual block after the other does not always help. Because of the residual blocks, residual networks were able to scale to hundreds and even thousands of layers and were still able to get an improvement in terms of accuracy. The residual blocks were very efficient for building deeper neural networks. When the original ResNet paper was published, it was a big deal in the world of deep learning. 
We will go through the paper, the implementation details, the network architecture, the experiment results, and the benefits they provide. Also known as WRNs or Wide ResNets for short. In this post, we will go in-depth into the understanding of Wide Residual Neural Networks.
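To make "increasing the width" concrete, here is a minimal sketch of how the number of weights in one residual block grows when every convolutional layer gets more kernels. It is plain arithmetic, not the authors' implementation. The function names, the base width of 16 channels, and the name `widen_factor` are assumptions chosen for illustration, and the block is assumed to contain two 3x3 convolutions with an identity shortcut and no bias terms.

```python
def conv_weights(in_channels, out_channels, kernel_size=3):
    """Number of weights in one 2D convolution (bias ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


def basic_block_weights(base_channels, widen_factor=1):
    """Weights in a residual block made of two 3x3 convolutions.

    Assumption: the block's input and output both have
    base_channels * widen_factor channels, so the shortcut is an
    identity connection and contributes no weights.
    """
    channels = base_channels * widen_factor
    return 2 * conv_weights(channels, channels)


# Compare blocks of increasing width (hypothetical base width of 16).
for k in (1, 2, 4, 8):
    print(f"widen_factor={k}: {basic_block_weights(16, k)} weights")
```

Because both the input and output channel counts scale with the widening factor, the weight count of a block grows with its square: doubling the width gives four times as many weights per block. This is why a wide network can afford to be much shallower for a similar parameter budget.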